The Shift: AI Security in the Agentic Workspace Is Now a CX Priority

Customer Experience is undergoing a structural transformation.

It is no longer driven solely by human agents or deterministic software systems. Instead, it is increasingly shaped by autonomous, decision-capable systems powered by Generative AI and evolving forms of Agentic AI.

This shift—central to AI Security in the Agentic Workspace—introduces a new execution layer inside enterprises:

Humans + AI Agents + Data = Unified CX Surface

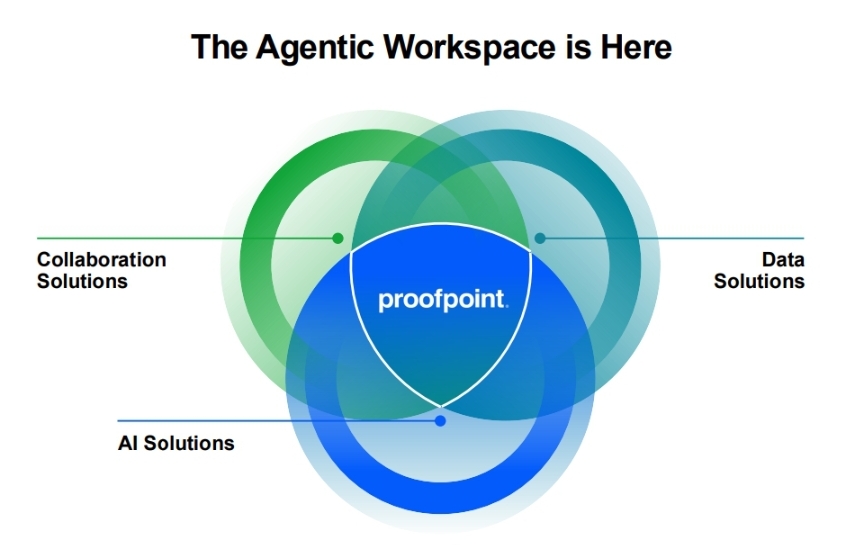

Companies like Proofpoint are framing this as a fundamental cybersecurity transition. But for CX leaders, this is something more critical:

A trust architecture challenge that directly impacts customer experience outcomes.

The Rise of Agentic CX: From Assistance to Autonomy

AI is no longer just assisting workflows—it is executing them.

Across Asia-Pacific and Japan (APJ) markets, signals from firms like Gartner and McKinsey & Company indicate:

- Rapid enterprise AI adoption across workflows

- Increasing integration of AI agents into operational systems

- A growing gap between AI deployment and governance maturity

This creates a new CX reality:

- AI writes responses

- AI accesses customer data

- AI triggers actions across systems

CX is no longer “designed and delivered”

It is executed dynamically by machines

Where CX Breaks: The Invisible Risk Layer

The core issue is not AI adoption.

It is uncontrolled AI behavior inside customer-facing journeys.

1. Shadow AI in CX Workflows

Employees increasingly use unauthorized AI tools to:

- Draft customer communication

- Process sensitive data

- Automate decision flows

Result: Zero visibility, fragmented CX governance

2. Data Leakage Through AI Systems

Modern AI systems:

- Interact with enterprise datasets

- Pull from CRM, support logs, and knowledge bases

- Operate without contextual data classification

This directly challenges traditional Data Loss Prevention models.

Result: Silent data exposure embedded within CX flows

3. AI Agents Acting Beyond Intent

Autonomous agents can:

- Execute workflows

- Access integrated systems

- Make real-time decisions

But they often do so:

- Without contextual guardrails

- Without intent validation

Result: Misaligned CX actions at machine speed

4. Insider Risk Amplified by AI

AI dramatically enhances insider capabilities:

- Rapid data extraction and summarization

- Context-aware manipulation of sensitive information

Result: Faster, more scalable, and harder-to-detect CX failures

The CX Consequence: Trust Erosion at Machine Scale

These risks do not remain technical.

They manifest directly in customer experience:

- Incorrect or hallucinated responses

- Exposure of private customer information

- Inconsistent or non-compliant interactions

- Loss of contextual accuracy

The outcome is systemic:

Trust erosion at scale

In the agentic era:

Your CX layer is your most exposed risk surface

Why Traditional Security Models Fail in Agentic CX

Legacy security frameworks were designed for:

- Human identity-based access

- Static permissions

- Predictable workflows

They are not built for:

- Autonomous AI agents

- Dynamic, real-time decisioning

- Cross-system interactions without explicit triggers

This creates a structural mismatch:

AI capability is accelerating faster than governance models can adapt

The New Model: Intent-Driven AI Security

To address this, a new paradigm is emerging:

Intent-Based AI Governance

Instead of asking:

- Who has access?

Organizations must ask:

- What is the system trying to do—and should it be allowed?

The CX Integrity Framework

A robust AI Security in the Agentic Workspace strategy must align:

- Intent → What action is being attempted

- Access → What systems/data are reachable

- Behavior → What actually happens in execution

CX integrity exists only when all three are aligned.
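
To make the three-way alignment concrete, here is a minimal sketch of what such a check could look like in Python. Everything in it is an assumption made for illustration: the `AgentAction` record, the `INTENT_POLICY` table, and the scope names such as `crm.read` are hypothetical and do not come from any specific product or framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentAction:
    """One action attempted by an AI agent (all fields are illustrative)."""
    declared_intent: str        # Intent: what the agent says it is doing
    granted_scopes: frozenset   # Access: systems/data the agent can reach
    touched_scopes: frozenset   # Behavior: systems/data it actually used


# Hypothetical policy: the scopes each intent is allowed to use.
INTENT_POLICY = {
    "answer_billing_question": frozenset({"crm.read", "billing.read"}),
    "issue_refund": frozenset({"billing.read", "billing.write"}),
}


def check_integrity(action: AgentAction) -> list[str]:
    """Return a list of violations; an empty list means all three align."""
    allowed = INTENT_POLICY.get(action.declared_intent)
    if allowed is None:
        return [f"unknown intent: {action.declared_intent}"]

    violations = []
    # Access vs. intent: the agent should not hold more than the intent needs.
    excess = action.granted_scopes - allowed
    if excess:
        violations.append(f"over-provisioned access: {sorted(excess)}")
    # Behavior vs. intent: what happened must stay within what was intended.
    drift = action.touched_scopes - allowed
    if drift:
        violations.append(f"behavior outside intent: {sorted(drift)}")
    return violations
```

In this sketch, an agent answering a billing question while holding `billing.write` is flagged twice if it also uses that scope: once for access that exceeds the intent, and once for behavior that drifts from it.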

Decision Framework: What CX Leaders Must Do Now

This is no longer a future concern. It is an operational priority.

1. Discover the AI Footprint

- Map all AI tools in use (approved + shadow)

- Identify AI touchpoints across customer journeys
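
As a rough illustration of the mapping step, the sketch below splits observed AI tool usage into approved and shadow buckets, grouped by journey stage. The tool names, the `APPROVED_TOOLS` allow-list, and the shape of the input (pairs of tool and journey stage, for example drawn from proxy or SaaS usage logs) are all assumptions.

```python
# Hypothetical allow-list of sanctioned AI tools.
APPROVED_TOOLS = {"support-copilot", "kb-search-assistant"}


def classify_footprint(observed):
    """Split observed (tool, journey_stage) pairs into approved and shadow usage."""
    footprint = {"approved": {}, "shadow": {}}
    for tool, stage in observed:
        bucket = "approved" if tool in APPROVED_TOOLS else "shadow"
        # Track every journey stage where the tool was seen.
        footprint[bucket].setdefault(tool, set()).add(stage)
    return footprint


# Example input, invented for illustration.
observed_usage = [
    ("support-copilot", "post-purchase support"),
    ("personal-chatbot", "complaint handling"),
    ("personal-chatbot", "renewal outreach"),
]
footprint = classify_footprint(observed_usage)
```

The useful output here is less the list of tools than the journey stages attached to each shadow tool, since those are the customer touchpoints operating without governance.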

2. Establish CX-Centric Guardrails

- Define acceptable AI behavior in customer interactions

- Enforce policy at prompt, response, and action levels
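
One way to picture enforcement at the three levels is a small set of checks, one per level. This is a sketch under stated assumptions: the regular expressions are deliberately simplistic, the action names are hypothetical, and a production system would rely on proper data classification rather than pattern matching alone.

```python
import re

# Hypothetical patterns and allow-list, for illustration only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
CARD = re.compile(r"\b(?:\d[ -]?){13,16}\b")
ALLOWED_ACTIONS = {"send_reply", "create_ticket"}


def check_prompt(prompt: str) -> bool:
    """Prompt level: reject prompts that carry something shaped like a card number."""
    return CARD.search(prompt) is None


def filter_response(response: str) -> str:
    """Response level: redact email addresses before the customer sees them."""
    return EMAIL.sub("[redacted email]", response)


def check_action(action: str) -> bool:
    """Action level: only allow-listed actions may be executed by the agent."""
    return action in ALLOWED_ACTIONS
```

The design point is that each level fails differently: a bad prompt is blocked before the model sees it, a risky response is rewritten, and an unapproved action is simply never executed.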

3. Implement Real-Time Observability

- Monitor AI actions during execution

- Detect anomalies in behavior and outcomes
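
Anomaly detection can start very simply. The sketch below flags an agent that performs more actions than expected within a sliding time window. The class name, the limit, and the window size are assumptions chosen for illustration; real deployments would likely combine several signals, not just action rate.

```python
from collections import deque


class ActionRateMonitor:
    """Flags an agent that performs more than `limit` actions in `window_s` seconds."""

    def __init__(self, limit: int, window_s: float):
        self.limit = limit
        self.window_s = window_s
        self._times = deque()

    def record(self, timestamp: float) -> bool:
        """Record one action; return True if the current rate looks anomalous."""
        self._times.append(timestamp)
        # Drop events that have fallen out of the sliding window.
        while self._times and timestamp - self._times[0] > self.window_s:
            self._times.popleft()
        return len(self._times) > self.limit


# Hypothetical thresholds: at most 3 actions in any 10-second window.
monitor = ActionRateMonitor(limit=3, window_s=10.0)
flags = [monitor.record(t) for t in [0.0, 1.0, 2.0, 3.0, 20.0]]
```

Here the fourth action inside the window trips the monitor, while the action at 20 seconds does not, because the earlier burst has aged out.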

4. Enable AI Forensics

- Ensure traceability of every AI-driven interaction

- Build audit-ready CX systems
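
Traceability is easier to trust when the record itself is tamper-evident. A common technique is a hash-chained log, in which each entry's hash covers the previous entry's hash. The sketch below uses only Python's standard `hashlib` and `json` modules; the event fields are hypothetical, and this is one possible approach, not a prescribed one.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    """Hash the previous entry's hash together with this event."""
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> dict:
    """Append an AI-driven interaction to the log, chained to the prior entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"event": event, "prev_hash": prev_hash, "hash": _digest(prev_hash, event)}
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Return True only if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Because each hash depends on everything before it, editing an old interaction after the fact breaks verification for that entry and every one that follows.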

5. Converge CX, Security, and Data Functions

- Break organizational silos

- Define shared accountability for AI-driven experience

India & APJ Lens: The Governance Gap Widens

In markets like India and broader APJ:

- AI adoption is accelerating rapidly

- Regulatory frameworks (e.g., emerging data protection regimes) are evolving

- Enterprise governance maturity is inconsistent

This creates a high-risk environment:

- Rapid AI deployment without structured oversight

- Increased exposure to compliance violations

- Greater vulnerability to trust breakdowns

The implication:

APJ enterprises may experience AI-driven CX failures earlier and more intensely than enterprises in more mature markets

Competitive Signal: A New Cybersecurity Battleground

Vendors are positioning around:

- Human-centric security

- Data-centric governance

- AI interaction control

But the broader landscape is converging:

- Cloud security platforms

- Identity and access providers

- Data protection ecosystems

This signals a category shift:

From cybersecurity to AI experience governance

CXQuest Insight: CX Is Now a Security Discipline

This is the defining shift.

Customer Experience is no longer limited to:

- Design thinking

- Journey orchestration

- Personalization engines

It now includes:

- AI governance

- Data integrity

- Behavioral control systems

CX leaders are no longer just experience owners.

They are:

Trust architects in AI-mediated environments

CXQuest Take: The Agentic CX Risk Curve

We call this pattern the Agentic CX Risk Curve.

As AI adoption increases:

- Efficiency rises linearly

- Risk escalates exponentially

Organizations that fail to govern this curve will face:

- Compounded CX failures

- Regulatory exposure

- Long-term trust erosion

Final Word: From AI Adoption to AI Accountability

The winners in the next era of CX will not be those who adopt AI the fastest.

They will be those who:

- Govern AI behavior

- Align AI with intent

- Protect customer trust at scale

Because in the age of AI Security in the Agentic Workspace:

Experience is no longer delivered.

It is executed—by systems acting on your behalf.

And that makes AI security not just a technical priority—

But a core CX mandate.