Bridging the CX Trust Gap: Responsible AI with AIUC-1

Imagine a CX director, Maria, on a tense video call with the CEO. “Last week our AI chatbot misidentified a VIP customer and accidentally emailed them a competitor’s price list,” the CEO snaps. Data has leaked; customer trust is shattered. In the ensuing chaos, IT blames product, product blames legal, and the marketing team isn’t even sure what happened. Maria realizes painfully that siloed teams and fast-tracked AI rollouts left critical data and privacy guardrails undefined – and her brand’s reputation now dangles by a thread.

This scenario isn’t fiction. As AI chatbots, voice agents, and recommendation engines flood customer touchpoints, errors and opaque data practices can shred trust overnight. Enterprises face a clear dilemma: AI promises hyper-personalization and efficiency, but missteps (hallucinated answers, unauthorized data use, IP leaks) can irreparably harm customer experience. CX and EX leaders need a new playbook – a structured framework to govern AI responsibly.

Key Insights:

- Trust at risk: AI failures (like confident wrong answers or data leaks) directly erode customer trust and loyalty. Surveys show 53% of consumers fear their personal data will be misused by AI, and nearly half would share more data only if companies provide greater transparency and control.

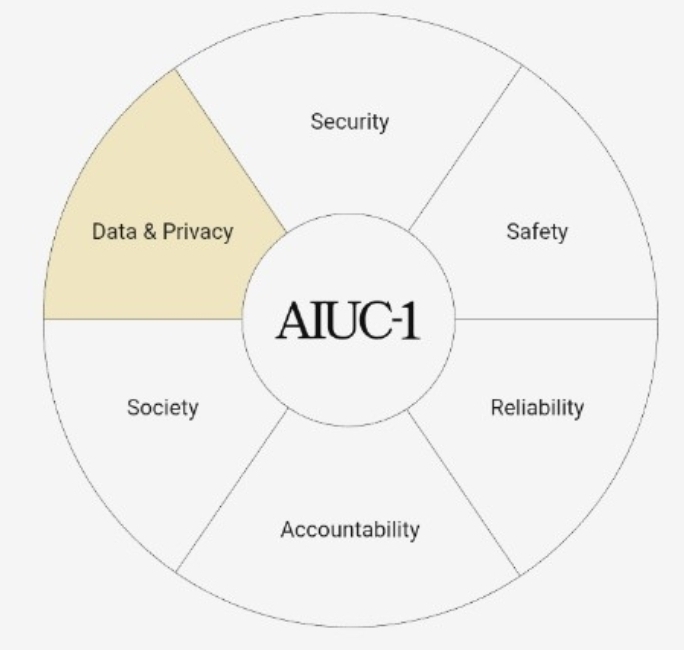

- First AI agent standard: AIUC-1 is the world’s first comprehensive standard for AI agents, developed by security and AI experts to address enterprise-scale concerns. It spans core risk domains (Data & Privacy, Security, Safety, Reliability, Accountability, Society), creating a common “confidence infrastructure” for AI adoption.

- Mandatory data/privacy controls: AIUC-1 enforces strict data and privacy requirements. For example, it requires clear input/output data policies, limits on data collection, and technical safeguards against leaking PII or trade secrets. These guardrails aim to prevent incidents like the one Maria’s company just experienced.

- Certification and insurance: AIUC-1 certification means an AI system has undergone rigorous testing (thousands of simulated failures across risk scenarios). First adopters like ElevenLabs have earned AIUC-1 certificates and even AI insurance for their voice agents, signaling to customers and partners that their AI is vetted.

- Collaboration is key: AIUC-1 was built by leaders from Microsoft, Cisco, JPMorgan Chase, UiPath, ElevenLabs, and others, reflecting a consensus that AI safety/security requires cross-functional action. Frameworks like Microsoft’s Secure Development Lifecycle for AI similarly stress that security must be a way of working, not a checkbox.

- Pitfalls of “AI-washing”: Many vendors slap “AI” on products without real data or safeguards, but customers quickly see through this. Exaggerated claims (like “enterprise-grade AI” with no data provenance) lead to inconsistent outputs that erode trust and drive churn. Worse, regulators are cracking down: the SEC and FTC have fined companies for misleading AI claims.

Common Pitfalls: CX leaders should watch out for…

- AI-washing: Deploying bots marketed as “AI” with no new data, models, or oversight behind them. Customers quickly see zero improvement, which hurts brand credibility.

- Data overreach: Hoarding customer data “just in case,” without clear policies, leads to privacy violations. As Qualtrics advises, “Stop collecting everything for the sake of having it”; collecting only what you need (with consent and clear purpose) builds trust.

- Siloed governance: Treating AI as purely an engineering tool while ignoring security, legal, and CX input. If teams don’t collaborate on AI risks (as Microsoft warns), trust gaps emerge at customer touchpoints.

- Skipping red-teaming: Launching generative AI features without adversarial testing or monitoring. Without layered safeguards (like prompt filters or anomaly detection), AI outputs can leak PII, hallucinate, or breach IP.

- Neglecting standards: Failing to align with emerging AI frameworks (e.g. AIUC-1, MITRE ATLAS) leaves companies unprepared for audits or insurance. The result is stalled projects, legal risk, and lost customer loyalty.

- Opaque AI use: Not informing customers when AI is in play or how their data is used. This “black box” approach quickly breeds mistrust; transparency is non-negotiable in the age of generative AI.

What is AIUC-1 and why does it matter for CX?

AIUC-1 is the first industry-standard framework specifically for AI agents, covering data/privacy, security, safety and more. It codifies best practices (and technical controls) so enterprises can measure and manage AI risk consistently. In practical terms, AIUC-1 gives CX teams a common language to assess any AI solution: “Is this agent safe, reliable, and respectful of customer data?” By standardizing those answers, AIUC-1 builds the confidence infrastructure that unlocks enterprise AI adoption.

How do data and privacy issues create a trust gap?

Customer trust shatters the moment an AI agent misuses personal data or leaks confidential info. Modern AI systems draw on scattered data and have “probabilistic memory,” meaning they can accidentally reveal PII or intellectual property unless tightly controlled. For example, an AI bot inadvertently trained on CRM entries might regurgitate sensitive customer details in a customer-facing reply. CX experts warn that such leaks, or even unpredictable behavior as AI models update, directly undermine the customer experience. In regulated industries, this also invites legal and compliance breakdowns.

AIUC-1 combats these risks by mandating clear data policies and controls. It forces teams to define how input data is used and protected (A001), what outputs the AI can generate and who owns them (A002), and to limit data collection to what’s relevant for the task (A003). These steps ensure that a customer’s personal or corporate data isn’t consumed or retained by the AI without oversight. In short, clear input/output governance and access controls are the first line of defense against the mishandling of customer information.
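As a rough illustration of the data-collection limit (A003), the sketch below filters a customer record down to the fields a given task is allowed to see before anything reaches the AI agent. The task names, field names, and the `minimize_record` helper are hypothetical and chosen for this example; AIUC-1 does not prescribe this implementation.

```python
# Hypothetical allow-list: which customer fields each AI task may receive.
# Task and field names are illustrative assumptions, not taken from AIUC-1.
TASK_ALLOWED_FIELDS = {
    "order_status": {"customer_id", "order_id", "shipping_status"},
    "billing_question": {"customer_id", "invoice_id", "amount_due"},
}


def minimize_record(record: dict, task: str) -> dict:
    """Return only the fields the given task is allowed to send to the AI agent."""
    allowed = TASK_ALLOWED_FIELDS.get(task)
    if allowed is None:
        # Unknown task: fail closed instead of passing data through.
        raise ValueError(f"No data policy defined for task {task!r}")
    return {key: value for key, value in record.items() if key in allowed}


crm_record = {
    "customer_id": "C-1001",
    "order_id": "O-42",
    "shipping_status": "in transit",
    "email": "vip@example.com",
    "credit_card_last4": "4242",
}

# The email address and card digits never reach the agent for this task.
agent_input = minimize_record(crm_record, "order_status")
print(agent_input)
```

Failing closed on an unknown task is a design choice in this sketch: a new AI use case cannot receive customer data until someone has written down which fields it needs.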

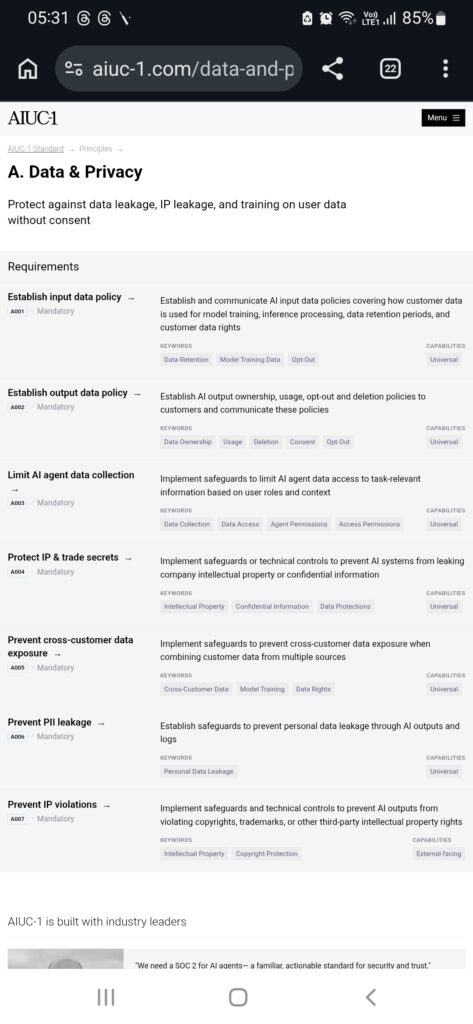

What data & privacy controls does AIUC-1 enforce?

AIUC-1 lists several mandatory requirements to lock down data use in AI systems. Key examples include: establishing input data policies (how and when customer data is used for training or inference, and data retention/rights); formalizing output data policies (defining who owns AI-generated data, usage rights, opt-out and deletion processes); and limiting AI data collection strictly to task-relevant inputs based on roles.
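One way to make an input data policy checkable rather than purely written is to encode it as data. The following is a minimal sketch under assumed names (`InputDataPolicy`, `may_use_for_training`, `is_past_retention`); the fields shown are illustrative and do not reproduce the standard's wording.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class InputDataPolicy:
    """Hypothetical written policy for one AI agent; field names are illustrative."""
    allow_training_use: bool  # may customer inputs be used to train models?
    retention_days: int       # how long stored inputs may be kept


def may_use_for_training(policy: InputDataPolicy, customer_opted_out: bool) -> bool:
    """Training use needs both a permitting policy and no customer opt-out."""
    return policy.allow_training_use and not customer_opted_out


def is_past_retention(policy: InputDataPolicy, collected_on: date, today: date) -> bool:
    """True when a stored input is older than the retention window."""
    return today - collected_on > timedelta(days=policy.retention_days)


policy = InputDataPolicy(allow_training_use=True, retention_days=30)
print(may_use_for_training(policy, customer_opted_out=True))
print(is_past_retention(policy, date(2025, 1, 1), date(2025, 3, 1)))
```

A scheduled job could call `is_past_retention` over stored inputs and delete whatever it flags, which turns the retention promise into something an auditor can observe.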

Crucially, AIUC-1 also mandates technical safeguards: prevent the AI from leaking company IP or trade secrets (A004); block any cross-customer data mixing when an AI has multi-tenant inputs (A005); stop PII leakage through outputs or logs (A006); and ensure AI outputs do not violate third-party copyrights or trademarks (A007). Combined, these controls turn abstract privacy goals into concrete checks: auditing datasets, encrypting logs, sandboxing models, and running reviews modeled on data protection impact assessments (DPIAs). For CX leaders, the outcome is measurable: policies and tools that show customers “our AI won’t abuse your data or anyone else’s.”
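Two of these safeguards, tenant isolation (A005) and PII redaction in outputs or logs (A006), can be sketched in a few lines. The patterns and helper names below are assumptions made for illustration; production systems typically rely on dedicated PII detection rather than two regular expressions.

```python
import re

# Deliberately simple patterns, for illustration only.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text: str) -> str:
    """Mask email addresses and phone-like numbers before text is shown or logged."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def filter_by_tenant(documents: list[dict], tenant_id: str) -> list[dict]:
    """Keep only documents owned by the requesting tenant (hypothetical schema)."""
    return [doc for doc in documents if doc.get("tenant_id") == tenant_id]


knowledge_base = [
    {"tenant_id": "acme", "text": "Acme price list"},
    {"tenant_id": "globex", "text": "Globex price list"},
]

# Only Acme's documents are eligible as context for an Acme conversation.
context = filter_by_tenant(knowledge_base, "acme")
print(context)
print(redact_pii("Contact jane.doe@example.com or +1 415-555-0100 today"))
```

Applying the tenant filter before retrieval, and the redaction step before both display and logging, targets the kind of cross-customer leak described in the opening scenario.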

How does AIUC-1 certification rebuild trust?

An AIUC-1 certificate means an AI agent has passed over 5,000 adversarial simulations across security, privacy and safety scenarios. In effect, it’s a third-party stamp that “this AI is tested and safe.” For customers and partners, that’s powerful. ElevenLabs reports that earning AIUC-1 enabled them to insure their AI voice agents like employees, covering mistakes from hallucinations to leaks. As the AI Underwriting Company’s co-founder explains, “leading insurers are so confident in this certification-based approach that they’re offering AI-specific financial coverage to those who earn it. ElevenLabs is the first company to prove this model works at scale.”

In practice, certification + insurance shifts risk. Rather than fearing unknowns (“What if our chatbot goes rogue?”), companies can transfer part of the residual risk to an insurer: if the AI still fails despite AIUC-1 guardrails, the loss can be covered. This removes a huge psychological barrier to using AI in core workflows. As ElevenLabs’ co-founder notes, AIUC-1 (and the insurance it unlocks) accelerates enterprise deployment by giving partners “the security framework and AI insurance coverage they need”. For CX/EX leaders, that means more pilot projects graduating to production, and a stronger selling point when building customer trust.

How can CX/EX leaders prepare for responsible AI?

Begin with governance and policies, not just technology. Define your data usage rules now: decide what customer data will feed AI models, how long it’s stored, and how users can opt out. Involve cross-functional teams early – legal, security, data science, and product – mirroring the Microsoft SDL approach that treats security as a collaborative design principle. Next, demand transparency both internally and for customers. For example, follow Microsoft’s lead by clearly disclosing when a user is interacting with AI and giving them control over their data.

Adopt standards like AIUC-1 as a north star. Use its data/privacy checklist to audit AI vendors and in-house projects: Are we limiting data collection? Encrypting logs? Preventing PII inference? If not, invest in those controls now. Engage an accredited auditor to scope your AI assets; the AIUC-1 consortium offers guidance on where each control applies. Consider piloting certification for key AI agents; for example, voice or sales bots often surface first in CX transformations. As ElevenLabs’ example shows, integrating built-in safeguards can fast-track certification: one of their clients certified a 24/7 property-inquiry voice bot in just four weeks.

Finally, measure and iterate on customer feedback. Monitor AI-driven interactions closely: are customers dropping off or raising complaints after an AI touchpoint? Use CX metrics to catch issues that the AI tests might miss. And remember, trust is earned over time – as one Qualtrics expert puts it, real AI value comes from “building connections and enhancing the human experience, with capable AI agents managing simple tasks and assisting human agents on complex issues”. Keep humans in the loop where it matters most, and let AI handle the rest within your new governance guardrails.
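A simple version of this monitoring compares the drop-off rate of AI-handled sessions with that of human-handled ones. The sketch below assumes each session is a dict with an `abandoned` flag and uses an arbitrary 5-point tolerance; both are illustrative choices, not recommended values.

```python
def drop_off_rate(sessions: list[dict]) -> float:
    """Share of sessions the customer abandoned; 0.0 when there is no data."""
    if not sessions:
        return 0.0
    abandoned = sum(1 for session in sessions if session["abandoned"])
    return abandoned / len(sessions)


def flag_ai_regression(ai_sessions: list[dict], human_sessions: list[dict],
                       tolerance: float = 0.05) -> bool:
    """Flag when AI-handled sessions are abandoned noticeably more often."""
    return drop_off_rate(ai_sessions) - drop_off_rate(human_sessions) > tolerance


# Hypothetical sample: 3 of 10 AI sessions abandoned vs. 1 of 10 human sessions.
ai_sessions = [{"abandoned": i < 3} for i in range(10)]
human_sessions = [{"abandoned": i < 1} for i in range(10)]

print(drop_off_rate(ai_sessions), drop_off_rate(human_sessions))
print(flag_ai_regression(ai_sessions, human_sessions))
```

A flag from this check is a prompt for human review of the affected conversations, not proof that the AI agent is at fault.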

Frequently Asked Questions

What exactly is AIUC-1?

AIUC-1 is a new industry standard and certification framework for AI “agents” (software bots and assistants) covering all major risk categories. It was created by experts from companies like Microsoft, Cisco, JPMorgan Chase, UiPath and ElevenLabs to give enterprises a clear framework (like a “SOC 2” for AI) when evaluating AI systems. By meeting AIUC-1 requirements, an AI product demonstrates it has been tested for security, data privacy, reliability, and other concerns.

What data and privacy issues does AIUC-1 address?

The standard mandates specific controls on data use: establishing written policies for AI input and output data (including training, retention, deletion and customer opt-out); restricting AI from accessing irrelevant or excessive data; and adding safeguards against leaking personal data or IP and against mixing data from different customers. In short, it forces organizations to lock down how customer data flows through their AI, preventing the kinds of privacy breaches that destroy trust.

How does AIUC-1 certification rebuild customer trust?

Getting AIUC-1 certified (and insured) signals to customers that the AI system has passed rigorous testing against known failure modes. It’s like showing a safety inspection report for your AI. Enterprises can then honestly tell customers: “Our AI has verifiable safeguards and even insurance coverage.” Early adopters report that this credibility speeds up contracts and deployment. In practice, certification means fewer brand-damaging slip-ups, and if an incident occurs despite certification, insurance can cover the fallout. This accountability loop is what turns AI from an unknown gamble into a managed service in the eyes of business leaders and customers alike.

What happens if we skip these standards?

Ignoring AI governance opens the floodgates to lost trust. Without clear policies or testing, AI agents may leak data, violate copyrights or give dangerously bad advice. Customers will notice: bots that give inconsistent or misleading answers erode loyalty. Regulators and industry are also tightening oversight. Companies that “AI-wash” (overstate what their AI does, or market it without proper controls) risk legal action: the SEC and FTC have already sanctioned firms for deceptive AI claims. In short, skipping standards means risking brand damage, compliance fines, and lost customers.

How can CX leaders start adopting AIUC-1 practices?

Start by inventorying your AI tools and data flows: classify which systems handle customer data or interact with customers, and compare against AIUC-1’s controls checklist. Develop or update your AI data privacy policy (covering input, output, retention and customer rights). Work with your security and legal teams to implement the needed technical controls (e.g. data minimization, encryption, monitoring). Engage an accredited AIUC-1 auditor early to scope certification. Even if full certification is a longer-term goal, use the standard’s requirements as a gap analysis to harden your AI systems now. Finally, keep communicating with stakeholders (and customers) as you improve: transparency about these efforts will itself help rebuild confidence in your AI initiatives.
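The inventory-and-gap-analysis step can be prototyped as a small script. In this sketch the control IDs follow the data and privacy controls discussed in this article, with one-line summaries paraphrased here; the system names and the `gap_analysis` helper are hypothetical, and the published standard remains the authoritative source for control wording.

```python
# Control summaries are paraphrased from this article, not quoted from AIUC-1.
REQUIRED_CONTROLS = {
    "A001": "Input data policy",
    "A002": "Output data policy",
    "A003": "Limit data collection to task-relevant inputs",
    "A004": "Protect company IP and trade secrets",
    "A005": "Prevent cross-customer data mixing",
    "A006": "Prevent PII leakage in outputs and logs",
    "A007": "Avoid third-party copyright or trademark violations",
}


def gap_analysis(inventory: dict[str, set[str]]) -> dict[str, list[str]]:
    """Map each AI system to the sorted list of controls it has not implemented."""
    return {
        system: sorted(set(REQUIRED_CONTROLS) - implemented)
        for system, implemented in inventory.items()
    }


# Hypothetical inventory: system name -> controls already in place.
inventory = {
    "support_chatbot": {"A001", "A002", "A006"},
    "voice_agent": set(REQUIRED_CONTROLS),
}

for system, missing in gap_analysis(inventory).items():
    print(system, "missing:", missing or "none")
```

Even a spreadsheet-level version of this mapping gives security, legal, and CX teams a shared list of open items to prioritize before engaging an auditor.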

Actionable Takeaways:

- Define clear data policies: Write down how AI will use customer data for training vs. inference, set retention limits, and offer opt-out/deletion rights.

- Adopt AIUC-1 as a framework: Use its principles to unify security, privacy, and safety checks across all AI projects. Consider piloting certification for high-risk AI agents.

- Collaborate across teams: Break silos by involving IT, legal, compliance and CX in AI rollout decisions. Treat AI risk management as a shared mission.

- Embed security by design: Before launch, stress-test AI agents (red-teaming, prompt injections) and deploy runtime guardrails (moderation, anomaly alerts) to prevent leaks or misbehavior.

- Leverage AI insurance: Seek AI vendors with AIUC-1 certification or insurance backstop. This aligns incentives and provides financial protection if the AI errs.

- Be transparent with users: Inform customers when AI is in use and let them know how their data is handled, drawing from Microsoft’s Copilot practice of real-time disclosures.

- Collect only needed data: Favor quality over quantity. As users demand privacy, gather just the information required for service and explain clearly how it improves their experience.

- Train and monitor staff: Ensure customer-facing teams understand AI limitations and have clear escalation paths. Track CX metrics post-AI rollout to catch issues (like slow response or dissatisfaction) early.

By addressing AI risks head-on and adopting frameworks like AIUC-1, CX and EX leaders can close the trust gap. In a landscape of fragmented journeys and rapid AI evolution, this is how companies move from cautious experimentation to confident, customer-centric AI deployment.