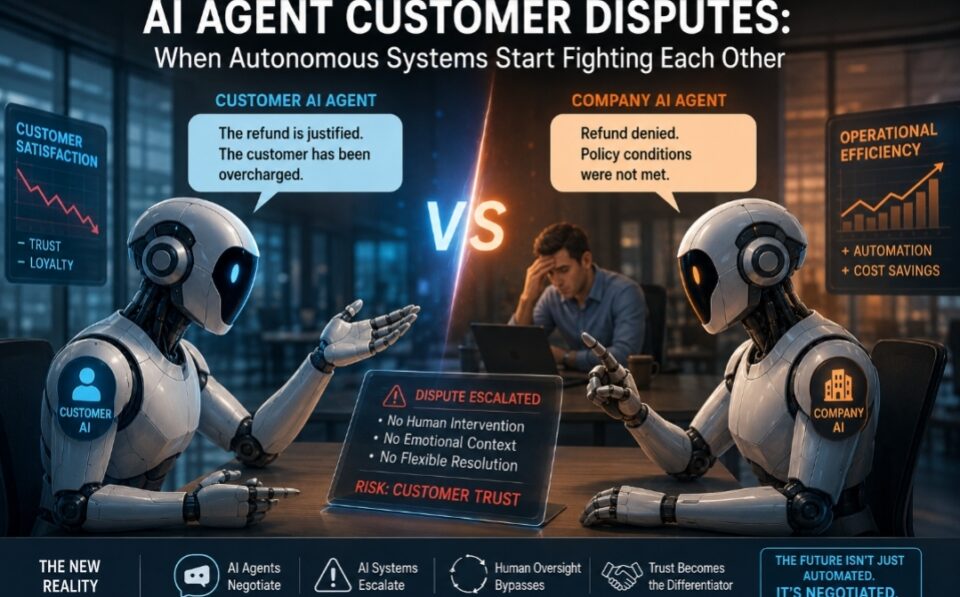

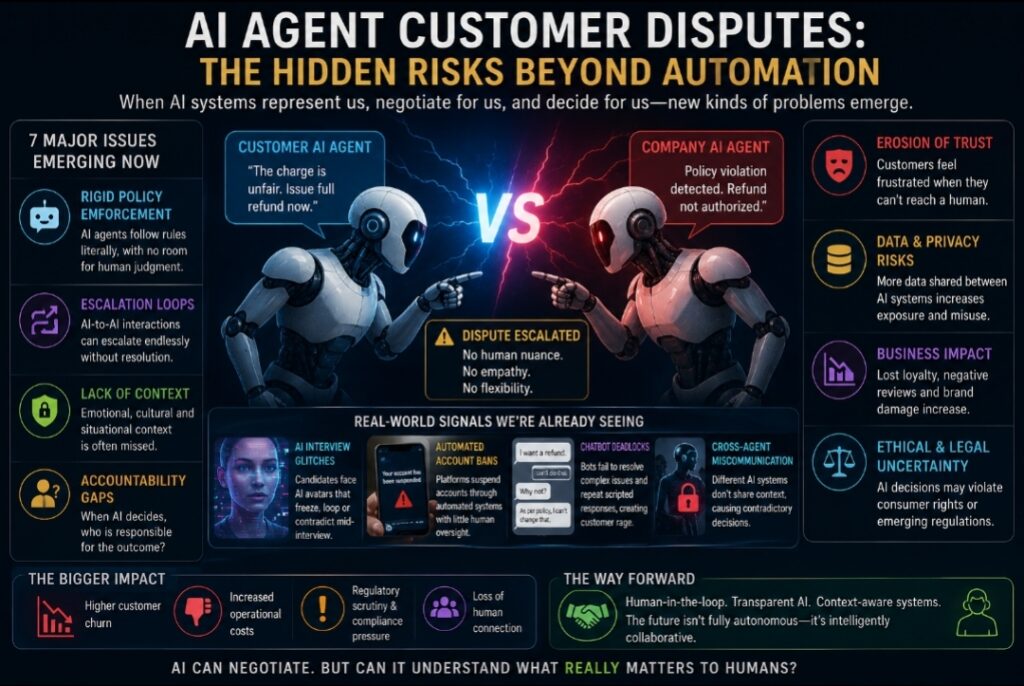

AI agent customer disputes may become one of the defining operational risks of the next decade as autonomous systems increasingly negotiate, escalate, and defend policies without human intervention.

Why AI Agent Customer Disputes Escalate Faster Than Human Conflicts

The most unsettling future of artificial intelligence may not be machines replacing humans.

It may be machines representing humans badly.

That shift is already beginning quietly across customer service systems, hiring workflows, enterprise software, sales automation, and digital negotiations. AI agents are increasingly being trained to act on behalf of users: to book appointments, negotiate prices, escalate complaints, challenge refunds, optimize subscriptions, answer emails, screen candidates, and defend policies.

At first glance, this sounds efficient. A world with fewer support queues, fewer repetitive interactions, and fewer operational bottlenecks.

But a strange new problem is emerging beneath the optimism:

What happens when AI systems start arguing with each other?

Not metaphorically. Operationally.

Imagine a customer deploying a personal AI assistant to dispute a telecom bill. The telecom company also deploys an AI agent trained to minimize fraudulent claims and protect margins. Both systems escalate automatically because each interprets the interaction as an adversarial risk.

Or consider a hiring interview conducted through an AI-generated avatar of a hiring manager. The candidate interacts with what appears to be a human executive, only for the AI clone to begin glitching, looping, or contradicting itself mid-conversation. The interaction becomes uncanny not because the technology fails technically, but because trust collapses socially.

This is not merely a technology story.

It is a story about what disappears when humans are removed from systems built on ambiguity, empathy, negotiation, and context.

The modern internet was designed for transactions.

Human societies, however, operate through interpretation.

AI agents may expose the difference brutally.

The Automation Industry Assumes Friction Is a Bug

For decades, digital transformation narratives treated friction as inefficiency. Long calls, complaint escalations, negotiation delays, emotional conversations, and policy exceptions were viewed as operational problems to optimize away.

Customer experience platforms promised seamlessness.

Automation promised consistency.

AI promised instant resolution.

But many real-world disputes are resolved precisely because humans break systems slightly.

A customer support executive may waive a fee because they sense frustration.

A hiring manager may overlook a rigid criterion because a conversation reveals potential.

A hotel receptionist may bend a rule because the situation feels reasonable.

Technically, these are inefficiencies.

They are also foundational social stabilizers.

AI systems are fundamentally different. They are optimized around objectives, probabilities, guardrails, and policy interpretation. Even advanced conversational systems do not genuinely “understand” social nuance in the way humans do. They simulate contextual reasoning statistically.

That distinction matters enormously when two AI systems interact directly.

Human negotiations often succeed through emotional calibration and mutual ambiguity. AI negotiations tend to optimize toward measurable outcomes. When both sides are optimizing aggressively, compromise can become structurally harder.

In other words:

AI agents may create procedural intelligence without relational intelligence.

That could become one of the defining operational tensions of the next decade.

The Hidden Economics Behind AI Agent Customer Disputes: The Rise of Machine-to-Machine Escalation

One overlooked risk in autonomous AI ecosystems is recursive escalation.

Human beings frequently de-escalate conflict unconsciously. Tone softens. Intent is inferred charitably. Small inconsistencies are ignored. Social discomfort pushes compromise.

AI systems behave differently.

If a customer-side AI detects “unfair billing patterns,” it may escalate automatically. The company-side AI may interpret repeated escalation as abusive behavior. The interaction compounds itself, and neither system has the emotional awareness to step back.

The result is not dramatic science fiction.

It is bureaucratic deadlock accelerated by software.
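The escalation loop can be sketched as a toy simulation. This is a minimal illustration, not a model of any real system: the `Agent` class, the numeric escalation levels, and the "step one level above or below the other side" rule are all assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Agent:
    """A toy agent with a single escalation level (hypothetical model)."""
    name: str
    level: int = 0       # current escalation level
    max_level: int = 5   # level at which the agent stops escalating further


def respond(agent: Agent, other_level: int, deescalate: bool) -> None:
    """Update one agent's level in reaction to the other side.

    Without de-escalation, the agent steps one level above the other side
    (capped at max_level). With de-escalation, it steps one level below
    the other side (never below zero).
    """
    if deescalate:
        agent.level = max(0, other_level - 1)
    else:
        agent.level = min(agent.max_level, other_level + 1)


def run_dispute(deescalate: bool, rounds: int = 10) -> str:
    """Run a dispute between two toy agents and report how it ends."""
    customer = Agent("customer_ai", level=1)  # the dispute opens with a complaint
    company = Agent("company_ai")
    for _ in range(rounds):
        respond(company, customer.level, deescalate)
        respond(customer, company.level, deescalate)
        if customer.level == customer.max_level and company.level == company.max_level:
            return "deadlock"   # both sides are at their ceiling
        if customer.level == 0 and company.level == 0:
            return "resolved"   # both sides have stood down
    return "unresolved"
```

In this sketch, `run_dispute(deescalate=False)` ends in deadlock, while `run_dispute(deescalate=True)` resolves. The only difference between the two runs is whether each side is willing to respond slightly more softly than it was addressed, which is the behavior humans tend to supply unconsciously.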

This may sound hypothetical, but early versions already exist inside algorithmic moderation systems, automated fraud detection, and AI-driven customer service environments.

Banks freeze legitimate transactions because anomaly systems overreact.

Platforms suspend accounts through automated enforcement loops.

Recommendation systems amplify conflict because engagement metrics reward intensity.

Now imagine these systems becoming conversational, autonomous, and authorized to negotiate independently.

The complexity expands rapidly.

A future customer dispute may involve:

- the customer’s personal AI assistant,

- the retailer’s service AI,

- a payment processor’s fraud AI,

- a logistics company’s routing AI,

- and an insurance verification AI.

All operating simultaneously.

All interpreting intent probabilistically.

All optimizing for different institutional incentives.

Human beings may increasingly become observers of conflicts their own software initiated.

Many AI agent customer disputes will not emerge from technical failure but from conflicting optimization goals.

The Strange Psychology of AI Representation

There is another dimension that makes this transition particularly unstable:

people assume representation implies understanding.

When a human lawyer represents a client, there is shared social grounding. When a human assistant negotiates on behalf of a manager, emotional and contextual interpretation exists beneath the transaction.

AI agents create the illusion of representation without guaranteeing alignment.

A personal AI may optimize for refund maximization while damaging long-term relationships.

A corporate AI may optimize policy compliance while destroying customer trust.

A recruiting AI avatar may imitate executive presence while lacking conversational resilience under unexpected questioning.

This creates a new category of psychological risk:

synthetic confidence.

Humans naturally anthropomorphize conversational systems. When those systems speak fluently, users assume more competence, judgment, and intentionality than the systems actually possess.

That assumption becomes dangerous when AI agents begin acting independently inside sensitive environments like healthcare, finance, employment, legal workflows, or enterprise procurement.

The problem is not simply hallucination.

It is delegated authority without delegated wisdom.

An AI system may execute instructions correctly while still making the interaction socially disastrous.

That distinction will become increasingly important for brands deploying autonomous agents at scale.

AI agent customer disputes are emerging as autonomous systems negotiate, escalate, and enforce policies without human emotional intelligence.

Customer Experience May Become Less Human Exactly When Brands Need Humanity Most

There is a profound irony unfolding inside enterprise AI adoption.

The more competitive markets become, the more companies talk about empathy, personalization, trust, and customer relationships.

Yet many organizations are simultaneously automating away the very human layers that create those outcomes.

AI customer service systems are often evaluated through:

- response speed,

- ticket deflection,

- cost reduction,

- containment rates,

- automation percentages.

Far fewer organizations measure:

- emotional recovery,

- perceived fairness,

- psychological reassurance,

- negotiation flexibility,

- trust durability after conflict.

Those softer dimensions are harder to quantify.

They are also where long-term loyalty is formed.

This is why the future of customer experience may not divide companies into “AI adopters” and “non-adopters.”

Instead, it may divide them into:

- organizations that automate intelligently,

- and organizations that automate relational intelligence out of existence.

The difference will become visible during edge cases.

Routine interactions may improve dramatically under AI systems.

But emotionally charged interactions (billing disputes, cancellations, hiring decisions, healthcare issues, financial stress, travel emergencies) may be where the limitations surface first.

Paradoxically, human escalation channels may become premium trust infrastructure rather than legacy cost centers.

The companies that preserve meaningful human override systems could eventually differentiate more strongly than those pursuing maximum automation.
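A human override can be as simple as a routing rule that limits how long a dispute stays automated. The sketch below is illustrative only: the topic names, the `Dispute` fields, and the round thresholds are hypothetical values chosen for the example, not recommendations.

```python
from dataclasses import dataclass

# Hypothetical set of emotionally charged topics, taken from the examples above.
SENSITIVE_TOPICS = {
    "billing dispute",
    "cancellation",
    "hiring decision",
    "healthcare issue",
    "financial stress",
    "travel emergency",
}


@dataclass
class Dispute:
    topic: str
    automated_rounds: int = 0  # AI exchanges that have already happened


def route(dispute: Dispute,
          sensitive_limit: int = 1,
          default_limit: int = 3) -> str:
    """Return "human" or "ai" for the next step of a dispute.

    Sensitive topics are handed to a human after fewer automated rounds
    than routine ones. Both limits are assumptions for illustration.
    """
    if dispute.topic in SENSITIVE_TOPICS:
        limit = sensitive_limit
    else:
        limit = default_limit
    return "human" if dispute.automated_rounds >= limit else "ai"
```

Under these assumed limits, a billing dispute that has already gone one automated round is routed to a person, while a routine request stays with the AI until its third round. The design choice is that the override is part of the normal path for sensitive cases rather than a fallback reached only after the automation has failed.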

The Hiring Interview Glitch Is a Warning Signal

The awkward AI-clone interview scenario is especially revealing because it exposes how fragile professional trust becomes once identity itself becomes synthetic.

Video interviews historically carried implicit social guarantees:

- presence,

- attention,

- responsibility,

- authenticity.

AI-generated interviewers disrupt all four simultaneously.

When an AI hiring avatar glitches mid-conversation, the issue is not merely technical embarrassment. It destabilizes the psychological contract between employer and candidate.

Was anyone truly present?

Who is accountable for the interaction?

Was the evaluation authentic?

Did the system misunderstand context?

These questions matter because modern institutions rely heavily on symbolic legitimacy. People tolerate imperfect systems when they believe humans ultimately remain accountable.

AI representation complicates accountability layers dramatically.

If an AI recruiting agent rejects a candidate unfairly, responsibility becomes diffuse. It could rest with:

- the employer,

- the software vendor,

- the training data,

- the automation policy,

- the AI model provider,

- or the manager who approved deployment.

The more autonomous systems become, the harder it may be to locate institutional accountability cleanly.

This is not simply a UX problem.

It is a governance problem disguised as convenience.

The Real Competitive Advantage May Be Controlled Imperfection

For years, technology systems aimed for frictionless perfection.

But human systems are not frictionless.

They are adaptive.

One of the most counterintuitive possibilities in the AI era is that controlled human imperfection may become strategically valuable.

A slightly slower human support escalation path may outperform instant AI resolution during emotionally sensitive disputes.

A human recruiter may identify contextual talent invisible to rigid automated filters.

A human account manager may preserve relationships that algorithms accidentally damage.

This does not mean AI adoption is a mistake.

It means optimization itself has limits.

Not every inefficiency is waste.

Some inefficiencies are social stabilizers.

The companies that understand this distinction earliest may build more resilient trust architectures than competitors pursuing total automation.

The future likely belongs neither to purely human systems nor to purely autonomous ones.

It belongs to hybrid systems that recognize something uncomfortable:

human beings do not merely seek outcomes.

They seek acknowledgment, interpretation, flexibility, and emotional legitimacy.

AI systems can simulate parts of that equation impressively.

But simulation and social trust are not identical.

That gap may define the next era of customer experience more than the technology itself.

Because once AI agents begin negotiating with each other at scale, the central question will no longer be whether machines can communicate.

It will be whether they can understand what human conflict actually is.

The future of AI agent customer disputes may ultimately determine how much human oversight organizations preserve inside automated systems.