Krisp has introduced Listener-side Accent Conversion, a real-time voice AI technology designed to improve how accented English is understood in live conversations.

Can AI Fix the “Sorry, Could You Repeat That?” Problem in Global CX?

Imagine this.

A customer in London calls a support center in Manila. The agent speaks fluent English. The customer does too. Yet both repeat themselves several times. The issue is not language. It’s accent comprehension.

The call stretches longer. The customer grows impatient. The agent feels stressed. Resolution slows down.

This everyday scenario quietly affects global customer experience operations.

Now a new voice AI innovation aims to solve this challenge at the listener level.

Krisp has launched Listener-side Accent Conversion, a technology designed to improve comprehension across meetings, contact centers, and voice AI systems.

For CX and EX leaders managing distributed teams, the implications are significant.

What Is Listener-Side Accent Conversion and Why Does CX Need It?

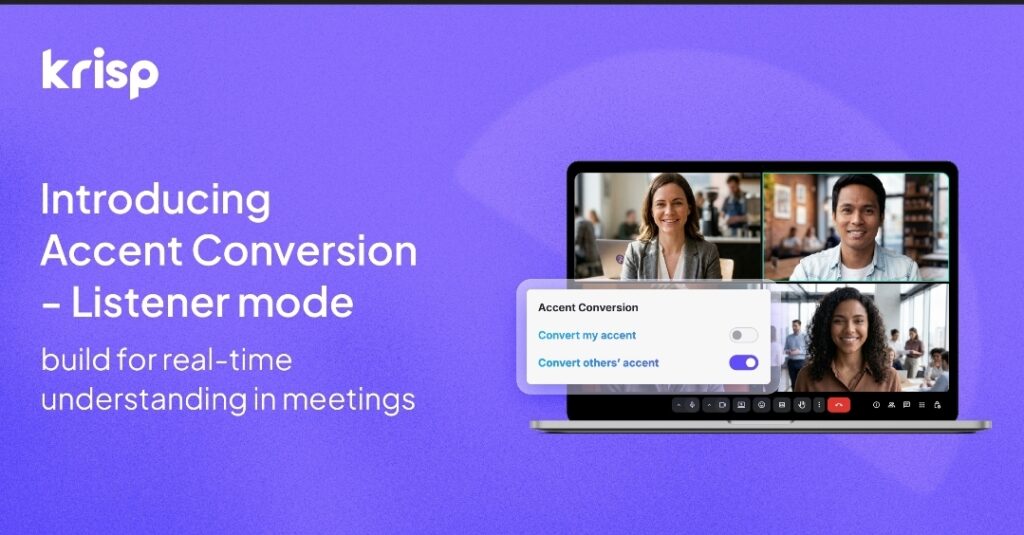

Listener-side Accent Conversion adapts incoming speech in real time to make accents easier for the listener to understand. It preserves the speaker’s natural voice while clarifying commonly misheard sounds.

Unlike traditional speech tools, this system does not change how someone speaks. Instead, it optimizes what the listener hears.

This distinction matters in modern customer experience ecosystems.

For years, voice technology focused on improving sound quality. Noise cancellation removed background sounds. Transcription captured spoken words.

Yet comprehension challenges persisted.

Accent variation often slowed conversations across global teams, customer calls, and AI systems.

The new approach addresses the comprehension layer of communication.

Why Are Accents a Growing CX Challenge?

Globalization transformed customer support models. Organizations now operate distributed teams across continents.

However, accent diversity introduces friction.

Consider three common environments:

1. Global meetings

Teams across regions often repeat phrases or slow conversations to ensure clarity.

2. Contact centers

Agents process dozens of accents daily. This increases cognitive fatigue and call duration.

3. Voice AI agents

Speech recognition systems struggle with accent variability, reducing automation success rates.

These issues may appear small individually. But at scale, they impact key CX metrics:

- Average Handle Time (AHT)

- First Call Resolution

- Customer Satisfaction

- Agent Cognitive Load

Voice is becoming the primary interface for digital interaction. Comprehension now sits at the center of experience design.

What Makes Krisp’s Approach Different?

The innovation lies in where the technology operates.

Traditional accent solutions often attempt to modify the speaker’s output. This can sound unnatural or intrusive.

Krisp’s system focuses on the listener experience instead.

Key technical characteristics include:

- Phoneme-level processing for precise sound adjustment

- Real-time audio adaptation without transcript generation

- On-device processing for privacy and performance

- Latency under 200 milliseconds, low enough to keep live conversation flowing naturally

- No storage of raw audio

This architecture allows speech to remain authentic while improving clarity.
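To make the sub-200 millisecond figure concrete, here is a minimal sketch of how delay typically adds up in a frame-based streaming audio pipeline. The class name, the four components, and every number below are illustrative assumptions, not Krisp's published parameters.

```python
from dataclasses import dataclass


@dataclass
class StreamingBudget:
    """Hypothetical latency budget for a frame-based audio pipeline."""
    frame_ms: float          # audio that must be captured before a frame can be processed
    lookahead_ms: float      # future context the model waits for (assumed)
    compute_ms: float        # time to process one frame on the device (assumed)
    output_buffer_ms: float  # playback buffering before the listener hears audio

    def total_ms(self) -> float:
        # End-to-end delay is the sum of all waiting and processing stages.
        return (self.frame_ms + self.lookahead_ms
                + self.compute_ms + self.output_buffer_ms)

    def keeps_up(self) -> bool:
        # Each frame must be processed faster than it is produced,
        # otherwise a backlog builds and delay grows over time.
        return self.compute_ms <= self.frame_ms

    def within_target(self, target_ms: float = 200.0) -> bool:
        return self.keeps_up() and self.total_ms() < target_ms


# Made-up example values, chosen only to show the arithmetic.
budget = StreamingBudget(frame_ms=20, lookahead_ms=100,
                         compute_ms=8, output_buffer_ms=40)
print(budget.total_ms(), budget.within_target())
```

The point of the sketch is that compute time is only one part of the budget: frame size, model lookahead, and playback buffering all count toward what the listener experiences.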

According to Krisp co-founder Arto Minasyan, the technology emerged from personal experience.

“I’ve spent more than 20 years working in tech with an Armenian accent. I know what it feels like to repeat yourself on a call… communication should be about ideas, not decoding speech.”

This perspective highlights a deeper issue in workplace communication.

Accent comprehension affects confidence, participation, and inclusion.

How Does Accent Conversion Improve Contact Center Performance?

Listener-side Accent Conversion can directly influence operational metrics inside CX organizations.

Here are several practical impacts.

Reduced repetition

Agents and customers spend less time clarifying words.

Lower cognitive load

Agents processing multiple accents experience less mental strain.

Faster resolution times

Clear communication speeds troubleshooting and problem solving.

Higher customer satisfaction

Customers feel understood without needing to adjust their natural speech.

Krisp co-founder and CEO Davit Baghdasaryan describes the operational impact:

“Agents process multiple accents all day, often in a second language. That adds friction, time, and cognitive load to every interaction.”

By improving comprehension at the listener level, organizations can reduce that friction without changing customer behavior.

How Does the Technology Work in Real Environments?

Krisp designed the system to function across multiple communication layers.

Meetings

The feature is available through Krisp’s Voice AI for Meetings application on Mac and Windows.

Teams can understand global colleagues more easily during live discussions.

Contact centers

Integration into Krisp’s Call Center AI platform will enhance what agents hear during live calls.

This directly supports faster resolutions and improved customer interactions.

Voice AI development

The company is also preparing an SDK for developers.

This allows organizations to embed accent clarity into voice assistants, automated support agents, and AI-driven communication systems.

Why Listener-Side Processing Matters for Privacy

Voice AI adoption often raises data security concerns.

Krisp’s architecture addresses this with local processing.

Key privacy principles include:

- No raw audio storage

- On-device processing

- No reliance on transcripts

- Minimal data exposure

For enterprises handling sensitive conversations, this design reduces compliance risks.

It also aligns with the growing trend of edge AI deployment.

How Accurate Is Accent Adaptation Across Regions?

Accent conversion models rely on diverse training data.

According to Krisp, its models currently improve comprehension across:

- Indian English accents

- Filipino English accents

- Latin American English accents

- African English accents

- Mandarin Chinese-influenced English

Coverage continues to expand as the models are trained on additional datasets.

This global approach reflects modern CX realities. Many organizations serve customers across dozens of linguistic backgrounds.

Key Insights for CX Leaders

Several strategic insights emerge from this innovation.

Accent comprehension is becoming a system-level requirement.

Voice interactions dominate customer support. Miscommunication now directly affects CX performance.

AI must enhance understanding, not replace people.

Technologies that assist human agents tend to see stronger adoption.

Real-time processing is critical.

Delayed translation or transcription fails during live conversations.

Voice interfaces are evolving rapidly.

After noise cancellation and speech translation, the next frontier is comprehension.

Common Pitfalls When Deploying Voice AI in CX

Even powerful technology can fail if implementation lacks strategy.

CX leaders should avoid several mistakes.

Over-automation

Voice AI should assist agents, not eliminate human judgment.

Ignoring agent experience

Tools must reduce workload, not introduce complexity.

Insufficient training

Agents need onboarding to understand new voice capabilities.

Fragmented deployment

Voice AI must integrate with existing CX platforms and workflows.

Successful implementations treat voice technology as part of a broader experience strategy.

How Should CX Teams Evaluate Accent AI Tools?

Before adoption, organizations should assess several factors.

| Evaluation Area | Key Questions |
|---|---|
| Latency | Does the system operate in real time? |
| Privacy | Is audio processed locally? |
| Accent coverage | Does it support global customers? |
| Integration | Can it connect with CX platforms? |
| Agent feedback | Do agents report reduced cognitive load? |

These criteria help determine whether voice AI truly improves the experience.

Frequently Asked Questions

How does accent conversion differ from speech translation?

Accent conversion keeps the same language while clarifying pronunciation. Translation converts speech into another language entirely.

Can accent AI improve customer satisfaction?

Yes. Clearer communication reduces frustration, repetition, and misunderstanding during support interactions.

Does accent conversion change the speaker’s voice?

No. Listener-side systems preserve the speaker’s natural voice and tone.

Will this replace human agents?

No. The goal is to assist agents by improving comprehension and reducing cognitive strain.

Is accent AI useful for internal collaboration?

Absolutely. Global teams benefit from smoother meetings and faster decision-making.

Can developers integrate accent conversion into apps?

Yes. Krisp plans to provide SDK access for voice AI agents and applications.

Actionable Takeaways for CX Leaders

- Audit voice interactions. Identify where accent misunderstandings slow conversations.

- Measure cognitive load. Evaluate how many accents agents process daily.

- Pilot listener-side tools. Test accent AI in small agent teams before full deployment.

- Monitor CX metrics. Track AHT, resolution rates, and CSAT after implementation.

- Train agents properly. Introduce new voice capabilities with structured onboarding.

- Prioritize privacy. Choose solutions with on-device processing and minimal data storage.

- Integrate voice AI with CX platforms. Ensure seamless workflow compatibility.

- Treat comprehension as CX infrastructure. Communication clarity should be built into the system.
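
For teams running a pilot, the metric tracking above can be sketched in a few lines. This is a minimal example under stated assumptions: the `Call` record, its field names, the "pilot"/"control" grouping, and the sample values are all hypothetical, and CSAT is computed here as the share of 4 and 5 ratings on a 1 to 5 survey, which is one common convention rather than the only one.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class Call:
    group: str                    # "pilot" (accent AI on) or "control" (off)
    handle_time_s: float          # total handle time for the call, in seconds
    resolved_first_contact: bool  # True if no follow-up contact was needed
    csat: Optional[int]           # 1-5 survey score, None if the survey was skipped


def summarize(calls, group):
    """Return AHT, first-call resolution rate, and CSAT for one group."""
    subset = [c for c in calls if c.group == group]
    rated = [c.csat for c in subset if c.csat is not None]
    return {
        "aht_s": mean([c.handle_time_s for c in subset]),
        "fcr": sum(c.resolved_first_contact for c in subset) / len(subset),
        # Share of satisfied responses (4 or 5); None if nobody answered.
        "csat": sum(s >= 4 for s in rated) / len(rated) if rated else None,
    }


# Made-up sample data, far too small to draw conclusions from.
calls = [
    Call("control", 420, False, 3),
    Call("control", 380, True, 4),
    Call("control", 400, True, None),
    Call("pilot", 360, True, 5),
    Call("pilot", 340, True, 4),
    Call("pilot", 380, False, 2),
]

for group in ("control", "pilot"):
    print(group, summarize(calls, group))
```

A real pilot would need far more calls per group, and comparable call types in each, before any difference in AHT or CSAT could be attributed to the tool.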

Accent diversity reflects the global nature of modern business. It also reveals hidden friction inside communication systems.

Technologies like listener-side accent conversion signal a new direction for voice AI.

Instead of changing how people speak, the system adapts how conversations are understood.

For CX and EX leaders, that shift could transform the quality, speed, and inclusiveness of global communication.